Market data is the lifeblood of modern financial innovation – from quant trading strategies and algorithmic execution to portfolio risk models and AI-driven analytics. Companies delivering real-time and historical market data via APIs are enabling quantitative teams, fintech innovators, and institutional investors to build faster and trade smarter by tapping into normalized feeds from dozens of trading venues.

Yet beneath the surface of high-performance APIs lies a critical operational problem that many data consumers – and even data providers – overlook:

Market data isn’t just big – it’s heterogeneous, fragmented, and constantly evolving.

Raw market feeds arrive from dozens of venues, each with its own formats, timestamp conventions, symbology systems, and protocols.

Every exchange, instrument type, and product class can carry its own quirks and semantics.

Teams building production applications struggle to harmonize this into usable, analytics-ready data.

Even the most sophisticated engineering teams find themselves overwhelmed by:

• Normalizing symbols across venues

• Tagging and enriching tick-level events

• Reconciling inconsistencies between live feeds and historical archives

• Tracking corporate actions, splits, and adjustments

• Integrating reference data with pricing and order book feeds

• Creating reproducible workflows for backtesting, research, and analytics
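Two of the tasks above, symbol normalization and split adjustment, can be sketched in a few lines. This is a minimal illustration only: the venue names, symbols, the `VENUE_SYMBOL_MAP` table, and the `Split` record are hypothetical placeholders, and a production mapping would be driven by maintained reference data rather than a hard-coded dictionary.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical mapping from (venue, venue-local symbol) to one canonical symbol.
# Real symbology tables are far larger and change over time.
VENUE_SYMBOL_MAP = {
    ("VENUE_A", "ABC"): "ABC",
    ("VENUE_B", "ABC.X"): "ABC",
}


def normalize_symbol(venue: str, raw_symbol: str) -> str:
    """Return the canonical symbol for a venue-local symbol."""
    key = (venue.upper(), raw_symbol.upper())
    if key not in VENUE_SYMBOL_MAP:
        # Fail loudly: a silent fallback would hide a mapping gap.
        raise KeyError(f"no mapping for {raw_symbol!r} on {venue!r}")
    return VENUE_SYMBOL_MAP[key]


@dataclass(frozen=True)
class Split:
    symbol: str
    ex_date: date
    ratio: float  # new shares per old share, e.g. 4.0 for a 4-for-1 split


def adjust_price(symbol: str, trade_date: date, price: float,
                 splits: list[Split]) -> float:
    """Back-adjust a historical price for every split that took effect later."""
    factor = 1.0
    for split in splits:
        if split.symbol == symbol and trade_date < split.ex_date:
            factor *= split.ratio
    return price / factor
```

With a single 4-for-1 split, a price of 400.0 recorded before the ex-date adjusts to 100.0, while prices on or after the ex-date pass through unchanged. Keeping the adjustment a pure function of the price and the split list is one way to make backtests reproducible.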

This operational chaos silently erodes productivity, slows innovation, burdens engineering resources, and pushes high-value talent into tedious data wrangling – the exact opposite of what fintech and quant teams are hired to do.

The Hidden Cost: Lost Insights & Slowed Innovation

When data teams spend weeks wrangling feeds and troubleshooting edge cases instead of building strategy, the business impact is real:

• Delayed strategy deployment

• Inconsistent analytical outputs

• Risk of silent errors in production systems

• Missed signals in trading and research workflows

• Elevated operational costs without business leverage

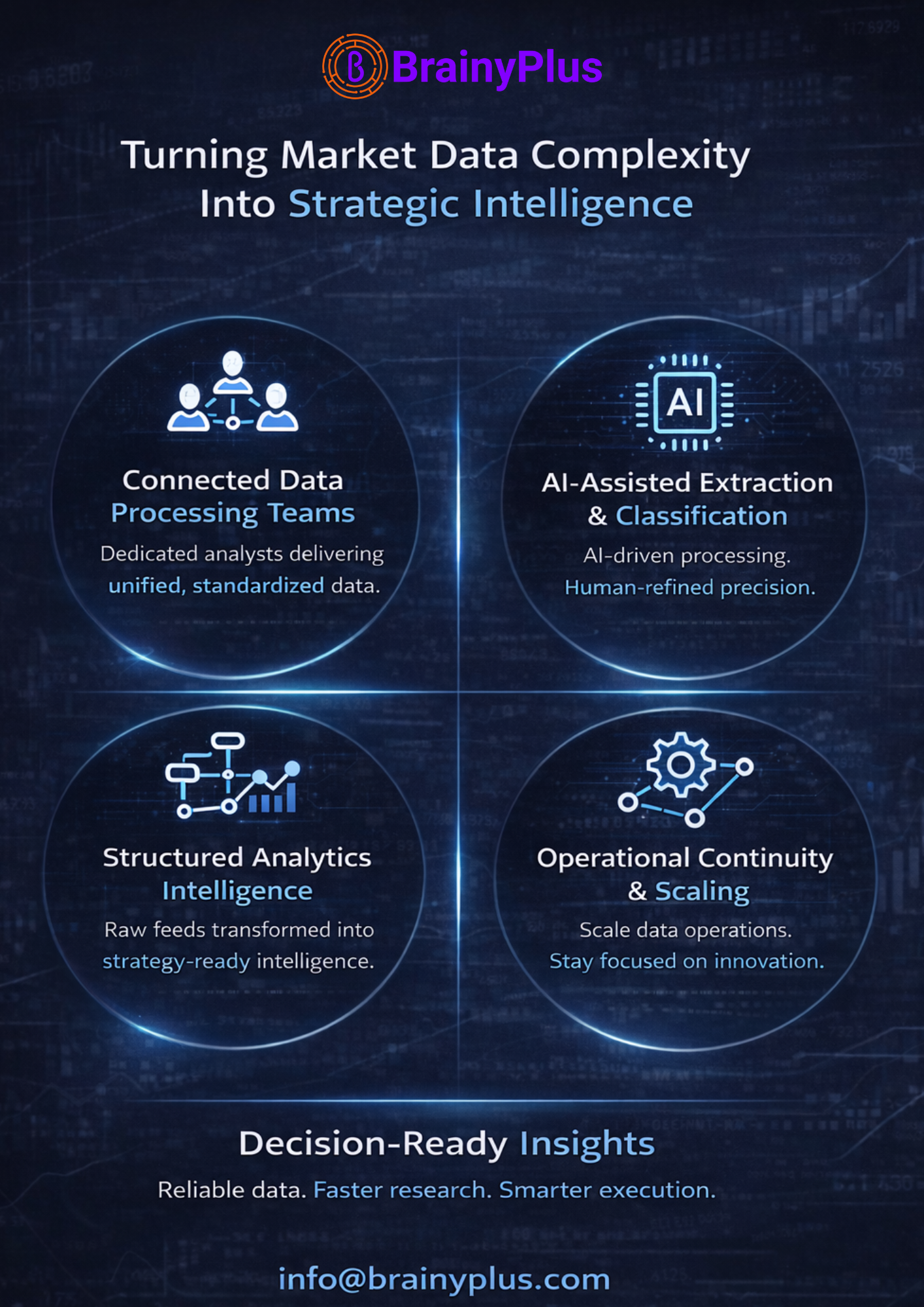

How BrainyPlus Solves This with a Continuous Virtual Data Processing Network

BrainyPlus helps financial data platforms and their customers unlock hidden value by operationalizing continuous data processing through an AI-augmented, Human-in-the-Loop (HIL) model:

Connected Data Processing Teams: Dedicated analysts work with your engineering pipelines — normalizing feeds, standardizing symbology, tagging events, and merging reference data into unified schemas.

AI-Assisted Extraction & Classification: We automate data parsing, format conversion, and event tagging — while human analysts validate and refine outputs for accuracy and strategic context.

Structured Analytics Intelligence: Instead of raw bytes, clients get analytics-ready datasets: instrument histories, normalized order book sequences, harmonized timestamps, and integrated corporate events with business logic applied.

Operational Continuity & Scaling: As new datasets are onboarded or venues expand, BrainyPlus maintains the workflows — eliminating bottlenecks and keeping your teams focused on innovation and product differentiation.

Decision-Ready Insights: With structured market data, research teams build models faster, quants iterate strategies reliably, and product teams deliver high-performance analytics features without firefighting data inconsistencies.
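To make timestamp harmonization concrete, here is a minimal sketch that assumes each venue reports epoch timestamps in its own unit (milliseconds on one, microseconds on another). The `Tick` record, the venue names, and the helper functions are hypothetical and do not describe BrainyPlus's actual pipeline. Each feed is converted to integer nanoseconds and the feeds are then merged into one time-ordered stream.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple

# Multipliers that convert each supported unit to nanoseconds.
_NS_PER_UNIT = {"s": 1_000_000_000, "ms": 1_000_000, "us": 1_000, "ns": 1}


class Tick(NamedTuple):
    ts_ns: int      # event time, nanoseconds since the Unix epoch
    venue: str
    symbol: str
    price: float


def harmonize(venue: str, unit: str,
              rows: Iterable[tuple[int, str, float]]) -> Iterator[Tick]:
    """Convert one venue's (timestamp, symbol, price) rows to a common unit."""
    scale = _NS_PER_UNIT[unit]  # raises KeyError on an unknown unit
    for ts, symbol, price in rows:
        yield Tick(ts * scale, venue, symbol, price)


def merge_feeds(*feeds: Iterable[Tick]) -> Iterator[Tick]:
    """Lazily merge feeds that are each already sorted by time."""
    return heapq.merge(*feeds, key=lambda tick: tick.ts_ns)
```

Integer nanoseconds avoid the rounding that floating-point seconds introduce, and `heapq.merge` keeps memory use flat because it never materializes the full feeds. It does assume that each input feed is already in time order.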

Business Impact You Can Measure

By partnering with BrainyPlus:

• Engineering resources shift from data cleanup to strategy deployment

• Research cycles accelerate with higher-quality data

• Product roadmaps progress without data bottlenecks

• Data quality becomes a competitive advantage

If your business depends on high-fidelity market data – but internal teams are stretched between engineering deliverables and data standardization – BrainyPlus offers a virtual data processing backbone that frees your people to focus on innovation and growth.

#MarketData #AIWorkflows #HumanInTheLoop #DataOps #FinancialTech #BusinessGrowth